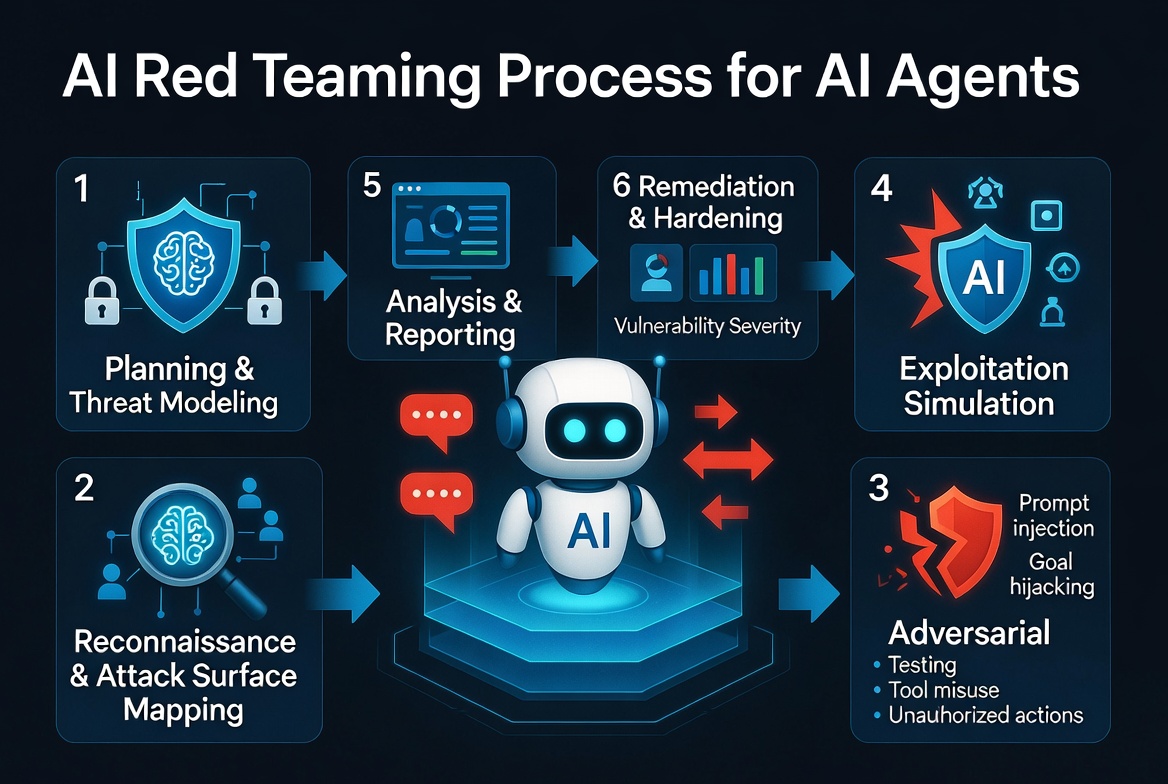

10 Reasons Why AI Agents Need Professional Penetration Testing

AI agents are no longer experimental. They’re calling customers, accessing databases, making decisions, and executing actions with minimal oversight. They are digital workers — and like any employee, they need to be vetted, monitored, and tested for security.

Traditional penetration testing focuses on code, APIs, and infrastructure. But AI agents operate differently. They reason, use tools, and can be manipulated in ways that standard security assessments completely miss.

Here are 10 reasons why every company deploying AI agents should invest in specialized AI penetration testing — from prompt injection to tool misuse, privilege escalation to autonomous threats.

Unique attack surfaces beyond traditional software

AI agents introduce novel vulnerabilities like prompt injection (direct or indirect), tool misuse, goal hijacking, memory exploitation, and chain-of-thought manipulation. These aren’t covered by standard network or application pen tests. Traditional testing focuses on code, APIs, and infrastructure — but agents blend reasoning with action in unpredictable ways. Specialized adversarial testing is the only way to simulate real exploits.

High risk of prompt injection leading to unauthorized actions

Prompt injection ranks as the top vulnerability in frameworks like OWASP Top 10 for LLMs and agentic systems. Attackers can hijack agent behavior via user inputs, retrieved web content, or documents, causing data leaks, tool abuse, or execution of harmful commands. Penetration testing validates defenses like input sanitization, output filtering, and privilege boundaries before deployment — not after an incident.

Agents act as powerful insiders with broad permissions

AI agents often receive excessive privileges, tool access (email, databases, APIs), or credentials, turning a compromise into immediate insider threats. A single successful attack can lead to data exfiltration, privilege escalation, or cascading failures across multi-agent systems. Pen testing uncovers permission creep, orphaned identities, and weak authentication before attackers find them.

Autonomous behavior amplifies damage from misbehavior

Unlike static apps, agents plan, reason, use memory, and chain actions without constant human oversight. This can result in unintended goal pursuit, data leakage, or system manipulation. Penetration testing (including red teaming) simulates scenarios where agents are steered toward harmful outcomes or bypass safety controls — the kind of behavior that only emerges under adversarial conditions.

Integration with tools and external systems expands the attack surface

Agents call external APIs, execute code, or interact with third-party services. This creates vectors for tool misuse, supply-chain attacks, or indirect prompt injections via retrieved data. Pen testing evaluates tool-calling safeguards, boundary enforcement, and the full agent workflow to prevent real-world abuse before it happens.

Rapid evolution outpaces static security measures

AI agents update frequently — model changes, new tools, retraining — and their behavior can be non-deterministic. Periodic traditional pen tests provide only snapshots; specialized, ongoing AI pen testing is needed to catch emerging vulnerabilities in live, adaptive systems. Security can’t be a one-time checkbox with agents.

Protection against financial, reputational, and operational losses

A compromised AI agent can cause data breaches, unauthorized transactions, harmful outputs, or service disruptions. Incidents involving AI systems have already led to significant costs, regulatory scrutiny, and trust erosion. Proactive pen testing identifies issues early, minimizing potential damage far cheaper than post-breach remediation.

Compliance, regulatory, and governance requirements

Frameworks like NIST AI RMF, ISO/IEC 42001, and emerging AI regulations demand rigorous risk assessments for high-impact systems. Pen testing provides documented evidence of security controls, helps map to compliance needs, and demonstrates due diligence for handling sensitive data or critical decisions.

Defense against sophisticated, AI-augmented adversaries

Attackers increasingly use their own AI tools for reconnaissance, exploit chaining, and automation. Pen testing your AI agents with similar offensive techniques (including AI-driven red teaming) ensures your defenses keep pace and reveals how agents might be manipulated or used against your organization.

Building trust and enabling safe scaling of AI initiatives

Many organizations report security or privacy incidents with AI agents (excessive data access, unmonitored behavior). Thorough pen testing uncovers blind spots in monitoring, auditability, and human-in-the-loop controls, fostering stakeholder confidence and allowing safer, faster deployment of agentic AI at enterprise scale.

The Bottom Line

AI agents aren’t just another piece of software. They’re active, reasoning systems with real-world permissions. A successful attack on an agent isn’t a theoretical vulnerability — it’s a compromised digital employee with access to your data, tools, and customers.

Companies should treat AI security as an ongoing program rather than a one-time exercise, combining human expertise with AI-assisted testing for the best results. Investing upfront prevents far greater costs later.

🦞 Ready to secure your AI agents?

I audit OpenClaw deployments, test for prompt injection, and harden agent infrastructure. Let’s talk before you deploy.

🔒 View Pentest Services →Sources: OWASP Top 10 for LLMs, NIST AI RMF, Palo Alto Networks Unit 42, McKinsey, a16z, Gravitee, Edgescan, Straiker.ai

Leave a Reply