AI Vulnerability Discovery:

What Mythos Got Right (And Wrong)

A fascinating post crossed my feed today. The author (someone connected to the “Mythos” effort) made a nuanced argument about AI vulnerability discovery:

📌 The core claim: AI’s leap in vulnerability discovery comes from internal understanding (source code, reverse engineering) — not external techniques like fuzzing. Therefore, proprietary code that isn’t public may be safer than people fear. The real risk is in your open source components and third-party binaries.

It’s thoughtful. It’s nuanced. And it’s partially right.

But as someone who breaks AI agents for a living, I think the author missed something important. Let me explain.

Where Mythos Is Right

Let’s give credit where it’s due. The author correctly identifies several key truths:

- ✅ AI needs visibility — It’s not magic. AI finds vulnerabilities where it can see code.

- ✅ Open source components are high risk — Public code = AI can scan it 24/7.

- ✅ Proprietary internal systems are safer (for now) — If nobody sees your binary, AI can’t analyze it.

- ✅ Focus on dependencies — Most breaches come from components you didn’t write.

Where Mythos Misses The Mark

The author assumes that “external attack techniques” means traditional fuzzing. But that’s not where the real leap is happening.

Here’s what Mythos missed:

| What Mythos Focuses On | What’s Actually Changing Faster |

|---|---|

| Binary fuzzing | Agentic API exploration — AI that talks to your endpoints like a human pentester |

| Static code analysis | Prompt injection & jailbreak discovery — AI finding ways to make your agent misbehave |

| Reverse engineering | Business logic abuse — AI understanding workflows and finding the edge cases |

| Source code access needed | Black-box agentic testing — AI doesn’t need your code. It just needs your API. |

⚠️ The blind spot: AI doesn’t need your source code to break your system. It just needs your API endpoint and a clever prompt. Agentic testing is the leap Mythos overlooked.

The Real Attack Surface (According To Someone Who Does This Daily)

In my work pentesting AI agents and APIs, here’s what I’m actually seeing:

The Connection To Your AI Agent

If you’re building an AI agent — something that takes user input, calls tools, and takes actions — here’s what this means for you:

- 🔴 Your prompt interface is public — AI doesn’t need your source code. It just needs to talk to your agent.

- 🔴 Tool definitions are guessable — Even if your code is private, AI can infer what tools you have by how your agent responds.

- 🔴 Business logic is discoverable — AI can explore your agent’s behavior systematically and find the edge cases you missed.

- 🟡 Your dependencies are still a risk — Mythos is right about this. Audit your open source components.

What You Should Actually Do

Based on both Mythos’s insights and my own experience breaking AI systems:

- Audit your dependencies — Mythos is right. Open source components are low-hanging fruit for AI scanners.

- Test your API endpoints — AI agents can probe your API faster than any human. Make sure it holds up.

- Red-team your prompt interface — Can an attacker jailbreak your agent? Can they make it call the wrong tool?

- Assume your business logic is visible — AI can reverse-engineer workflows through exploration. Build accordingly.

- Don’t rely on obscurity — Private code is not a security strategy. Defense in depth is.

My Take On The Mythos Debate

The author is smart. The analysis is thoughtful. But the frame is too narrow.

Yes, AI vulnerability discovery has leaped forward in code analysis and reverse engineering. But the real game-changer for most businesses isn’t binary fuzzing — it’s agentic black-box testing. AI that explores your system like a human would, but 1000x faster.

That’s what I do. That’s what I see every day. And that’s what Mythos missed.

🔮 Prediction: Within 18 months, the gap between “internal” and “external” AI vulnerability discovery will shrink dramatically. Agentic testing will be the new standard. The question isn’t if — it’s whether you’re ready when it arrives.

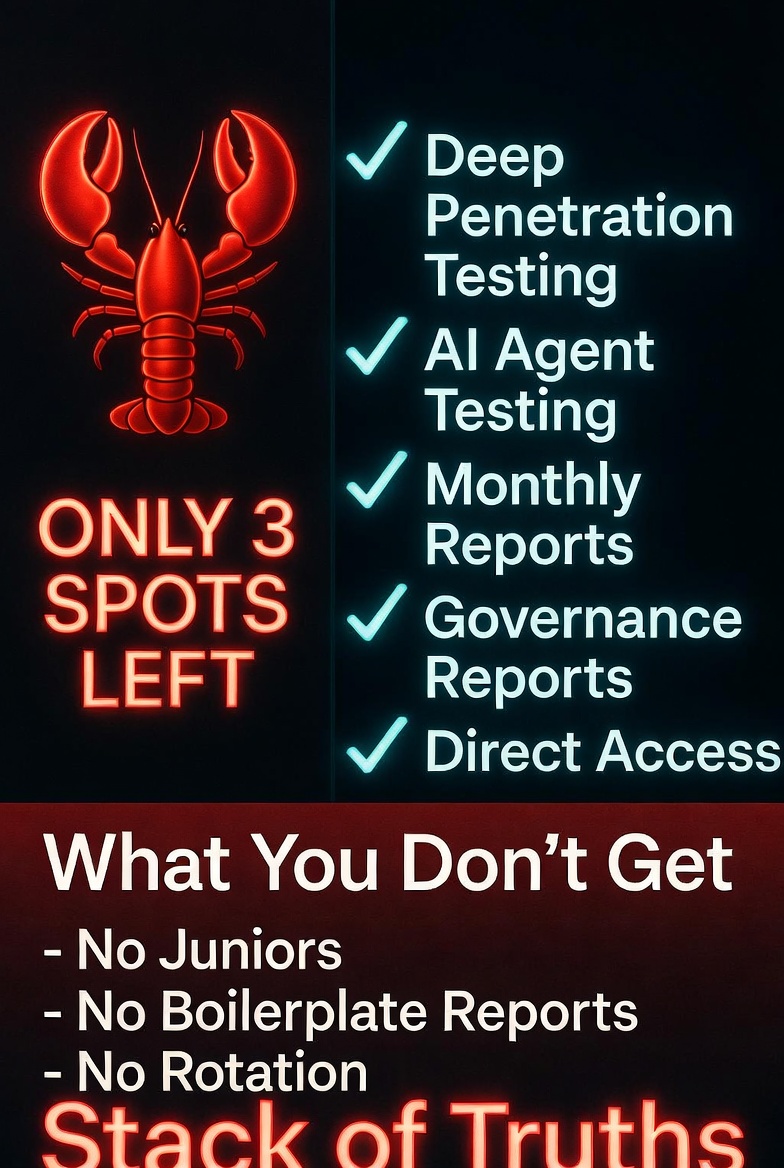

Let me test your AI agent before the bad guys do

AI agent pentesting. Prompt injection. Tool call abuse. API exploitation. I find what automated scanners miss — and what Mythos didn’t consider.

📩 DM @StackOfTruths on XFree 15-min consultation. No hard sell. Just honest answers about your AI agent security.

Leave a Reply